Models designed to forecast events that can be classed this way should be able to distinguish the one from the other in some useful way. Exactly what constitutes “useful,” though, will depends on the nature of the forecasting problem.

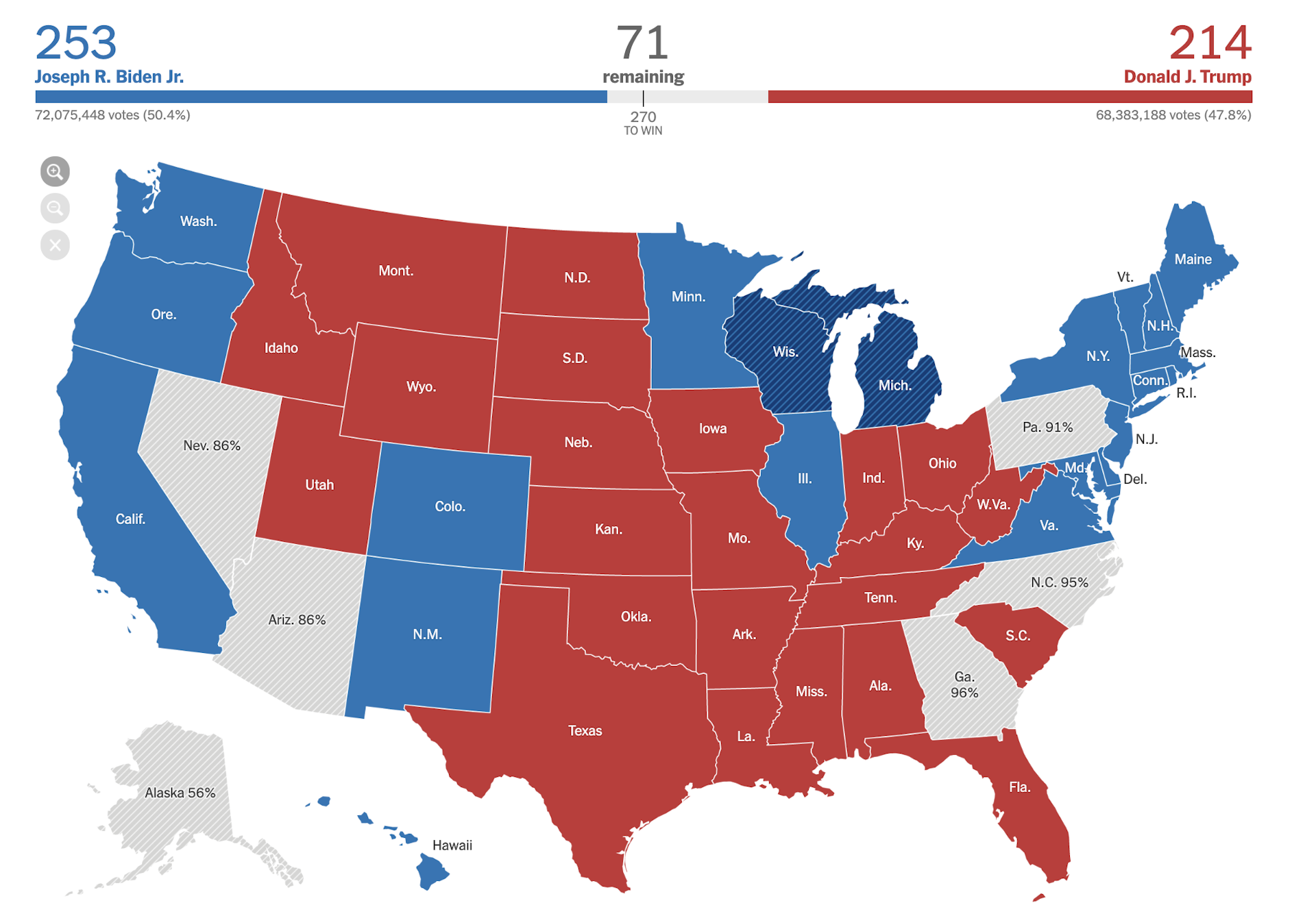

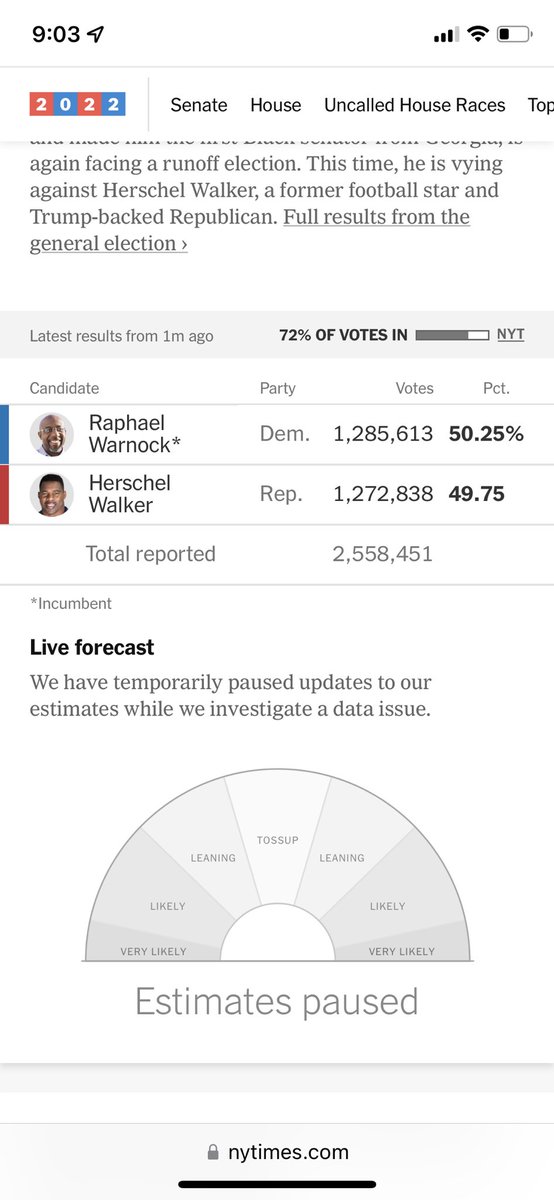

Discrimination refers to a model’s ability to distinguish accurately between cases in different categories: heads or tails, incumbent or challenger, coup or no coup. So, what kinds of errors should we look for? Statistical forecasters draw a helpful distinction between discrimination and calibration. But we can still assess whether the errors in the model estimates were small enough to warrant confidence in that model, and make its application useful and worthwhile. It’s not realistic to expect any model to get exactly the right answer-the world is just too noisy, and the data are too sparse and (sadly) too low quality. The world is inherently uncertain, and sound forecasts will reflect that uncertainty instead of pretending to eliminate it. The important point here is that these forecasts are probabilities, not absolutes, and we really ought to evaluate them as such. So when is a forecast like Silver’s or Linzer’s wrong? If a meteorologist says there’s a 20 percent chance of rain, and it rains, was he or she wrong? If an analyst tells you there probably won’t be a revolution in Tunisia this year and then there is one, was that a “miss”? Over at Votamatic, Drew Linzer’s model gives Obama a stronger chance of re-election-better than 95 percent-but even that estimate doesn’t entirely eliminate the possibility of a Romney win. As of today, his model of the presidential contest pegs Obama’s chances of re-election at about 70 percent-not exactly a toss-up, but hardly a done deal, either. Silver won’t try to call the race one way or the other instead, he’ll estimate the probabilities of all possible outcomes. Or, if I say Obama will win in November but Romney does, I was obviously wrong.īut here’s the thing: few forecasters worth their salt will make that kind of unambiguous prediction.

For example, if I predict that a flipped coin is going to come up heads but it lands on tails, my forecast was incorrect.

What caught my mind’s eye in Hounshell’s tweet, though, was what it suggested about how we conventionally assess a forecast’s accuracy. The question at the head of this post seems easy enough to answer: a forecast is wrong when it says one thing is going to happen and then something else does. Silver uses a set of statistical models to produce daily forecasts of the outcome of the upcoming presidential and Congressional elections, and I suspect that Hounshell was primarily interested in how the accuracy of those forecasts might solidify or diminish Silver’s deservedly stellar reputation. Silver writes the widely read FiveThirtyEight blog for the New York Times and is the closest thing to a celebrity that statistical forecasting of politics has ever produced. This topic came up a few days ago when Foreign Policy managing editor Blake Hounshell tweeted: “Fill in the blank: If Nate Silver calls this election wrong, _.”
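To make the idea of evaluating forecasts as probabilities a bit more concrete, here is a minimal Python sketch. It is not taken from Silver's or Linzer's models. It assumes two common scoring choices that this post does not itself name: the Brier score (the mean squared gap between forecast probabilities and what happened) and a simple concordance measure of discrimination (how often a case where the event occurred received a higher probability than a case where it did not). The function names and all of the numbers are hypothetical and purely for illustration.

```python
from itertools import product


def brier_score(probs, outcomes):
    """Mean squared gap between forecast probabilities and 0/1 outcomes.

    Lower is better; 0.0 would mean every forecast was both certain and right.
    """
    if len(probs) != len(outcomes):
        raise ValueError("probs and outcomes must have the same length")
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)


def concordance(probs, outcomes):
    """Share of (event, non-event) pairs in which the event case received
    the higher forecast probability. Ties count as half a win."""
    events = [p for p, y in zip(probs, outcomes) if y == 1]
    non_events = [p for p, y in zip(probs, outcomes) if y == 0]
    if not events or not non_events:
        raise ValueError("need at least one event and one non-event")
    wins = sum(
        1.0 if e > n else 0.5 if e == n else 0.0
        for e, n in product(events, non_events)
    )
    return wins / (len(events) * len(non_events))


# Hypothetical forecasts for five cases, and whether each event occurred.
forecast_probs = [0.9, 0.7, 0.2, 0.6, 0.1]
observed = [1, 0, 0, 1, 0]

print("Brier score:", round(brier_score(forecast_probs, observed), 3))
print("Concordance:", round(concordance(forecast_probs, observed), 3))

# A single surprising outcome costs a hedged forecast less than a confident one.
print("70% forecast, event did not occur:", round(brier_score([0.70], [0]), 4))
print("95% forecast, event did not occur:", round(brier_score([0.95], [0]), 4))
```

Scores like these say little about any single forecast. They become informative when averaged over many forecasts, which is the sense in which a probabilistic forecaster can be judged right or wrong.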
